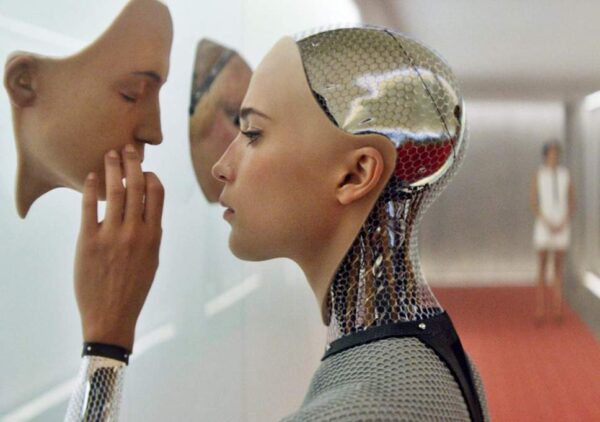

Should robots understand humans in order to work well with them?



Robots are automated machines that improve processes and productivity in the workplace. Controlled by people, they come with programs and sensors that let them do their work well. It may seem inconceivable that a machine could understand the human who created it to work for them. As hard as it sounds, some of today's robots come with programs that allow them to understand people and work with them better. Not all robots do so yet, but in a few years we will probably see the majority of them understanding humans far better than they do today.

In a bid to get people and machines to work well together, robot manufacturers such as Universal Robots introduced cobots (collaborative robots) to the manufacturing industry. Cobots have been around for a while now, and they are no longer just a staple of manufacturing: their deployment extends to the retail, health, hospitality, marine and travel industries, and even to homes.

Cobots interact directly with humans, and they are changing the face of workplaces. For this interaction to be harmonious and free of casualties, machine and human have to understand each other. The question is: how do you make a cobot understand a human so that communication between them improves?

How to make cobots and workers get closer

· Use cameras and sensors

Cameras and sensors are important for promoting communication between cobots and humans. Cameras and touch sensors, accompanied by screens that display human-like facial features, bring the machine and the human closer together. In some restaurants, robots serve as waiters, among other duties. When a person asks such a robot for an item on the menu, the robot glances towards the item, emulating what a human would do and signalling that it knows what it is doing. This move alone assures the person that the robot is aware of the action it is about to take.

· Give them the ability to read minds

How does a machine get the ability to read minds? Some cobots can now read signals from the human brain and wave or smile depending on what they pick up. One good example is Baxter, a Rethink Robotics robot that Daniela Rus and her MIT team fitted with an EEG (electroencephalography) decoding system. Baxter reads the signals from a set of electrodes attached to a human's scalp. The cobot then recognises error-related potentials, which are characteristic patterns of brain activity that appear when a person notices a mistake.
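To make the idea concrete, here is a toy sketch of that feedback loop. It is not how Baxter actually works: real systems use trained classifiers on multi-channel EEG, whereas this sketch simply compares the average amplitude after the robot acts with a resting baseline. The function names, the two-option task and the 5 microvolt threshold are all assumptions made for illustration.

```python
from statistics import mean


def looks_like_error_potential(window_uv, baseline_uv, threshold_uv=5.0):
    """Return True if the post-action EEG window deviates from the baseline.

    window_uv and baseline_uv are lists of amplitudes in microvolts.
    The threshold is an illustrative value, not a clinical one.
    """
    return abs(mean(window_uv) - mean(baseline_uv)) > threshold_uv


def choose_option(initial_choice, window_uv, baseline_uv):
    """Switch a two-way choice if the observer's EEG suggests a mistake."""
    if looks_like_error_potential(window_uv, baseline_uv):
        return "right" if initial_choice == "left" else "left"
    return initial_choice


# Hypothetical readings: a calm baseline, then a strong response
# after the robot reaches towards the wrong side.
baseline = [0.0, 1.0, -1.0]
after_action = [8.0, 9.0, 10.0]
print(choose_option("left", after_action, baseline))
```

The point of the sketch is the loop itself: the robot acts, watches for a sign that the human thinks it made a mistake, and corrects course without anyone pressing a button or saying a word.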

· Teach them gesture recognition and imitation

Cobots are intended to work seamlessly with humans. To make this collaboration even more worthwhile, researchers are now focusing on helping cobots and humans communicate through gestures. One good example is Walt, a cobot that works alongside humans at Audi Brussels. Walt uses deep learning to recognise workers' gestures and communicates with them using the same gestures. Walt even learns new tasks by watching and imitating the workers, which saves Audi the cost and time associated with programming cobots to take on new tasks.

This also means that getting Walt to communicate better with personnel does not require hiring a team of professionals to show it the ropes. Though Walt is one of only a few such cases on the market today, it is part of a wave of change slowly taking shape in the world of advanced technology.

Wrapping it up

Cobots do not work for people; they work with them. They are like any other colleague in the workplace, and one way of optimising production and processes in any workplace is for colleagues to get along and understand each other. A cobot takes on the heavier or riskier duties while the human takes on the simpler ones. Even for these duties, a cobot still needs to understand that it works with people and must consider the safety of the environment around it. By using photographic sensors, force sensors and cameras, cobots are able to move freely and avoid collisions with people in their workplaces.
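As a rough illustration of how sensor readings can turn into safe behaviour, the sketch below slows a cobot down as a person gets closer and stops it on close approach or unexpected contact. It is only a sketch: the `SensorReading` type, the `speed_factor` function and every threshold are hypothetical, and none of the numbers come from a safety standard or a real product.

```python
from dataclasses import dataclass


@dataclass
class SensorReading:
    contact_force_n: float   # force felt at the arm, in newtons
    nearest_person_m: float  # distance to the closest person, in metres


def speed_factor(reading, force_limit_n=50.0,
                 stop_distance_m=0.3, slow_distance_m=1.0):
    """Return a speed multiplier between 0.0 (stopped) and 1.0 (full speed).

    All limits are illustrative assumptions.
    """
    # Unexpected contact: stop immediately.
    if reading.contact_force_n >= force_limit_n:
        return 0.0
    # Someone is too close: stop.
    if reading.nearest_person_m <= stop_distance_m:
        return 0.0
    # Nobody nearby: run at full speed.
    if reading.nearest_person_m >= slow_distance_m:
        return 1.0
    # In between: scale speed linearly with distance.
    span = slow_distance_m - stop_distance_m
    return (reading.nearest_person_m - stop_distance_m) / span


# A person standing 2 m away with no contact allows full speed.
print(speed_factor(SensorReading(contact_force_n=0.0, nearest_person_m=2.0)))
```

Slowing down gradually, instead of only stopping at the last moment, is what lets a cobot share a workspace without constantly halting production.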